Event Recap

Thank you to all those that attended.

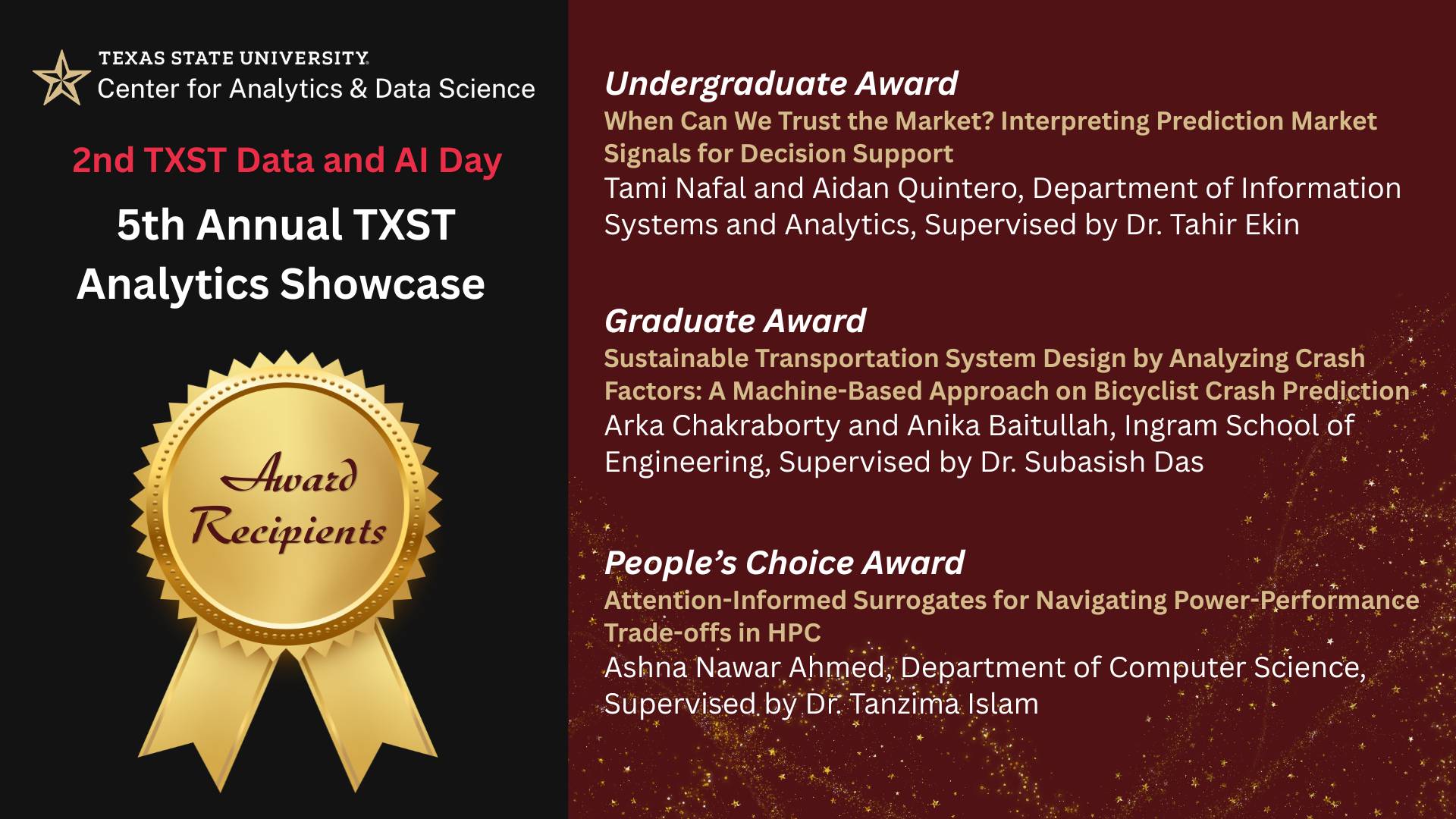

This April 2026, the Center hosted its 5th Annual Analytics Showcase as part of the 2nd TXST Data & AI Day, bringing together students, faculty, and industry professionals to celebrate innovation in data science, artificial intelligence, and analytics.

The event featured over 20 research posters, representing eight academic units across four colleges and guided by 14 faculty mentors. The Showcase highlighted the depth and diversity of student-led research at TXST, with projects spanning machine learning, high-performance computing, optimization, marketing analytics, and socially impactful applications such as transportation safety and fraud detection.

During the showcase, CADS also featured industry speakers and live demonstrations. Industry engagement was a key component of this year’s event, invited speakers Dr. Sean Guillory and Ram Prasad offered perspectives on emerging trends, workforce expectations, and the practical applications of analytics in industry settings. In addition, live demonstrations by Drs. Damian Valles and Rashik Shadman showcased developing drone technologies, with a hands-on look at physical AI systems. Attendees also engaged directly with students presenters through interactive poster sessions, gaining insight into both technical methodologies and real-world applications.

Projects explored timely topics such as AI’s influence on career confidence, predictive modeling for public safety, data quality assessment, and emerging technologies like drone-based systems. Across presentations, a common theme emerged: the importance of leveraging AI and data-driven tools not only as technological solutions, but as instruments for informed decision-making and societal advancement.

5th Annual Analytics Showcase

CADS is pleased to announce the 2nd TXST Data and AI Day featuring 5th Annual Analytics Showcase!

April 22, 2026 | Minifie Atrium (MCOY 434) | 10:30 AM- 1:00 PM

The Center for Analytics and Data Science (CADS) is pleased to announce the 2nd TXST Data and AI Day featuring 5th Annual Analytics Showcase, continuing our tradition of highlighting the innovative analytics, data science, and AI‑driven work emerging across Texas State. Building on the success of last year’s combined inaugural Data & AI Day and 4th Analytics Showcase, which featured industry speakers, student posters, and interdisciplinary panels, this year’s event will once again bring together our campus community for a celebration of applied analytics and AI.

Don't miss this opportunity to gain valuable insights, network with industry leaders, and expand your understanding of data and AI's role in shaping the future of diverse industries. Whether you're a student exploring career options, or simply passionate about the transformative potential of analytics and artificial intelligence, this showcase promises to be an inspiring event. This year, we have 20+ posters featuring data and AI research of more than 25 students across many departments.

Agenda

- 10:30-10:45 AM: Registration, Poster Setup, and Early Viewing

- 10:55 AM: Opening Remarks & Drone Demo

- 11:00 AM: Industry Session: Prediction Markets ft. Sean Guillory, BetBreakingNews

- 11:30 AM: Industry Session: Human-First AI System Design ft. Ram Prasad, Insight2Impact

- 12:00 PM: 5th TXST Analytics Showcase Poster Session and Reception

- 12:55 PM: Poster Awards Ceremony

Featured Posters

| Title | Abstract | Author(s) | Faculty Advisors | Department | Level |

|---|---|---|---|---|---|

Title Data Twin: Proactive Data Quality Risk Assessment for Loan Applications | Abstract As organizations increasingly rely on digital systems to serve large populations, understanding how user behavior changes at scale has become critical for effective system design and decision-making. The DataTwin project explores how analytics can be used to create a digital representation of population behavior, allowing analysts to model and simulate how individuals collectively interact with a system under varying levels of participation. Using multiple datasets representing different population sizes alongside a large reference population, this project examines how behavioral patterns evolve as scale increases. Through exploratory data analysis and visualization, the study identifies trends in participation, interaction patterns, and system response that emerge when small-scale behavior is expanded to larger populations. The goal of the DataTwin project is to demonstrate how digital twin concepts can be applied to anticipate real-world system performance before full deployment. By modeling behavioral patterns at scale, the project provides decision-makers with insights that support improved planning, system efficiency, and more informed design choices in environments where large populations interact with digital platforms. | Author(s) Aarya Chapagain and Tami Nafal | Faculty Advisors Dr. Tahir Ekin | Department Information Systems and Analytics | Level Undergraduate |

Title FraudSphere: Analyzing Detector Agreement and Reliability in Coordinated Fraud | Abstract Multi-agent fraud detection systems are widely used in healthcare, insurance, finance, and government to support high-stakes decisions. These systems combine multiple analytical agents, such as anomaly detectors, rule-based models, and pattern-matching algorithms, that operate in parallel. A common assumption is that errors made by different detectors are independent. Under this assumption, agreement among detectors is often interpreted as increased confidence. However, high confidence does not always mean a decision is correct, especially in adversarial fraud settings. In real-world fraud, actors often coordinate their behavior, reuse stories, and exploit shared weaknesses in detection systems. This coordination can cause multiple detectors to fail in similar ways, leading to overconfident and misleading system outputs. This project examines how coordinated fraud behavior affects the accuracy, confidence, and calibration of multi-agent fraud detection systems. Using synthetic data generated by the FraudSphere simulation framework, we compare system behavior under independent and coordinated fraud scenarios, controlled by a coordination strength parameter. We analyze detector outputs to measure dependence and study how simple aggregation methods may misrepresent confidence as coordination increases. We then demonstrate interpretable, dependence-aware calibration adjustments, such as estimating an effective number of independent detectors, that improve confidence alignment without adding model complexity. Rather than focusing on improving detection accuracy, this work emphasizes interpretability and responsible use of analytics. The results highlight the need for dependence-aware reasoning and reinforce the role of human-in-the-loop decision support in fraud detection systems. | Author(s) Aidan Quintero and Aarya Chapagain | Faculty Advisors Dr. Tahir Ekin | Department Information Systems and Analytics | Level Undergraduate |

Title A Signed Graph Approach to Prison Social Network Analysis | Abstract " Prison risk assessments are traditionally manual, static, and focused on individuals, overlooking group dynamics. This project uses de- identified data from a large Texas correctional agency to apply signed graph methods and community detection to prison social networks, aiming to identify subgroups that may indicate gang involvement among unlabeled inmates. From about one million records, we build an undirected signed graph on male inmates where nodes are individuals and edges represent housing proximity (positive) or incident co-involvement (negative). The cell-only graph includes 65,122 nodes and 111,931 edges (102,245 positive; 9,686 negative), and GraphC community detection uncovers 15 distinct communities that reveal latent subgroup structures and support scalable, data- driven gang risk assessment beyond manual methods. " | Author(s) Chloe Jones and Sim Heesu | Faculty Advisors Dr. Jelena Tešić | Department Computer Science | Level Undergraduate |

Title Adolescent Suicidal Ideation Prediction: Integrating Statistical and Machine Learning Approaches (48 x 36) | Abstract "Suicide remains one of the leading causes of death among adolescents globally, yet empirical evidence on mental health risk factors among ethnic-racial minority youth remains limited. This study investigates risk factors of suicidal ideation among Korean-Chinese adolescents using both statistical and machine learning techniques. Data was collected from survey responses of 267 Korean-Chinese adolescents recruited from bilingual secondary schools in Northeast China. Binary logistic regression was conducted in IBM SPSS to identify significant psychosocial predictors of suicidal ideation. Results indicated that despair significantly predicted suicidal ideation, with each one-unit increase associated with a 116% increase in the odds of suicidal ideation. Parent-child conflict and gender-based peer teasing also emerged as significant positive predictors, while parental promotion of mistrust unexpectedly showed a significant negative association. Next, a logistic regression machine learning model was implemented in Python using a 70/30 train-test split. The model achieved 55% accuracy and 64% recall in identifying adolescents experiencing suicidal ideation. Using both statistical methods and machine learning techniques in our study allowed us to identify associations between risk factors and suicidal ideation, and also to predict high-risk adolescents. Findings highlight opportunities for targeted interventions addressing familial and peer-related stressors to reduce suicide risk among adolescents." | Author(s) Ishal Dogar | Faculty Advisors Dr. Yishan Shen | Department OUR HDFS REU (ARC Lab) | Level Undergraduate |

Title Probabilistic Reliability Assessment for Human-Labeled Data: A Time-Study Application | Abstract "Interrater reliability is a fundamental data quality concern in data science applications that rely on human judgment, including time studies. Existing reliability methods vary in whether they capture agreement, consistency, or both. However, they typically reduce reliability to single coefficient-based summaries, limiting their ability to fully characterize rater behavior. Coefficients such as Krippendorff’s alpha and ICC remain widely used and provide important benchmarks. In this study, we compare these traditional measures with a proposed probabilistic method to highlight their complementary perspectives on reliability. A multi-rater time-study dataset was analyzed using distributional exploration, empirical CDF comparisons, and bootstrap resampling to evaluate each rater’s central tendency and measurement uncertainty. These analyses revealed meaningful differences in both average performance and variability across raters, with bootstrap confidence intervals highlighting uncertainty structures that coefficient-based methods do not explicitly capture. To model reliability directly, we developed a probabilistic framework that uses a multivariate lognormal representation of rater measurements, reflecting the positive-valued and skewed nature of time data. This model enables Monte Carlo estimation of interpretable reliability quantities, including probabilities of the form P(Φ_i > Φ_j) and confidence intervals for pairwise differences, allowing both consistency and agreement to be quantified within a unified structure." | Author(s) Mahjabeen Mustafa | Faculty Advisors Dr. Francis A Mendez | Department Information Systems and Analytics | Level Undergraduate |

Title The Graduation Rates during the Pandemic | Abstract Our research centers on the relationship between socioeconomic status and graduation rates. Utilizing data from SEDA and EdFacts, we evaluate socioeconomic status and learning outcomes for students graduating in 2019. Our intention is to receive an accurate characterization of these student trajectories prior to the events of the COVID pandemic, which rattled education across the globe. Using the SEDA data, we evaluate the standardized test scores and socioeconomic status for each district, this is tracked to the 8th grade cohort size and achievement for the 2019 graduating class, meaning we are evaluating their outcomes from the SEDA dataset in 2014. Through a merge process, we can evaluate these statistics in comparison to the EdFacts data on cohort size and graduation rate. This will allow us to track how factors such as prior achievement, socioeconomic status, and cohort size affected eventual graduation rates. When the EdFacts data is released for the graduation classes from 2020 onwards, we can then compare these statistics again to measure any potential changes in graduation rate and track disparities between districts, socioeconomic status, and cohort size | Author(s) Nathan Tadeusz Villarreal, Vee Primo, Krystyne Powell, and Taylor Tate | Faculty Advisors | Department Economics and Finance | Level Undergraduate |

Title Benchmarking Deep Learning Training Workloads on the LEAP2 HPC System: CPU vs. GPU Performance Characterization | Abstract "The growing adoption of deep learning in research demands a clear understanding of what institutional High Performance Computing systems can deliver. This study benchmarks the LEAP2 system at Texas State University by training three architecturally diverse models on both the CPU and GPU partitions using platform-optimized configurations: ResNet- 50 (convolutional neural network), BERT (transformer for natural language processing), and Vision Transformer (transformer for computer vision). Performance is evaluated through, computational efficiency (GFLOPS), and computation time breakdown. GPU speedup varied substantially by architecture, with ResNet-50 achieving 19.5x, BERT achieving 16.9x, and ViT achieving 4.8x. Time breakdown analysis shows the GPU's advantage comes primarily from parallelizing matrix-heavy operations, with CNN workloads benefiting most. These findings characterize LEAP2's capabilities for AI researchers and inform resource planning for deep learning workflows." | Author(s) Prashant Panta | Faculty Advisors Dr. Damian Valles | Department Computer Science | Level Undergraduate |

Title Developing Artificial Intelligence Models for Predicting Stress in Cattle Behavior | Abstract Livestock naturally experience stress during production, but distress negatively impacts welfare and represents a significant economic cost to producers. Manual observation of stress is not always practical and may be invasive. In recent years, artificial intelligence (AI) and computer vison systems have been implemented in precision livestock farming (PLF) settings to automate livestock monitoring and identify certain behaviors animals display in natural settings. The objective of our research is to further develop an existing AI model to detect pastured cattle and classify behaviors that indicate stress or deviations from baseline conditions. Procedures involving animal subjects were approved by the Texas State Institutional Animal Care Use Committee (#9298). Static and moving cameras collected footage of cattle on pasture. Raw footage was clipped into 20-30 second clips using VideoLan and exported to Computer Vision Annotation Tool (CVAT), where individual cattle were identified and annotated with their corresponding behavior, with an emphasis on “stress response”. You Only Look Once (YOLO) was then used by processing the annotated data to identify and predict stress. Annotated data was split into two segments: training and validation. By refining existing AI models to predict stress conditions in livestock, we can contribute to technologies that advance animal welfare. | Author(s) Susan Ledezma | Faculty Advisors Dr. Merritt Drewery, Dr. Damian Valles | Department Agricultural Sciences | Level Undergraduate |

Title When Can We Trust the Market? Interpreting Prediction Market Signals for Decision Support | Abstract Prediction markets aggregate participant beliefs through trading activity to produce probability-like signals about future events. As regulatory clarity, commercial partnerships, and media visibility increase, these markets are moving from niche forecasting tools into mainstream analytical conversations. Universities and research institutions are also adopting prediction markets as experimental platforms for studying collective judgment and supporting decision- making under uncertainty. Despite their appeal, prediction market probabilities should not be treated as definitive answers. Market prices reflect trading behavior as much as information, and their reliability depends on conditions such as participation, liquidity, and concentration of capital. In thin or experimental markets, these factors can distort signals, creating a false sense of precision and confidence. This project examines how analytics methods can be used to evaluate the reliability of prediction market signals, particularly in university-based or experimental settings. Using simulated prediction market data representing both normal and degraded market behavior, the analysis explores relationships among prices, trading volume, volatility, and liquidity to identify conditions under which market signals may be less informative. Rather than labeling markets as manipulated or trustworthy, the project focuses on producing interpretable indicators that help analysts contextualize probabilities. The goal is to support human judgment by clarifying when market signals deserve confidence and when caution is warranted, enabling more responsible use of prediction markets in decision-support environments. | Author(s) Tami Nafal and Aidan Quintero | Faculty Advisors Dr. Tahir Ekin | Department Information Systems and Analytics | Level Undergraduate |

Title Enhancing Machine Learning Accessibility through Conversational AI and Robust Full-Stack Architecture | Abstract "This study describes how a machine learning deployment has shifted to a scalable and full-production web application, starting as a static web wrapper. The project has high modularity and better performance thanks to the replacement of a classic Python Streamlit interface with a ReactJS frontend and a Django REST API back-end. The main innovation is a chatbot powered by LLM which substitutes the traditional input forms with a chat interface. This intelligent collector uses natural language understanding to request, clarify and validate user information to guarantee high data fidelity to the underlying ML model. The frontend is based on the MVVM (Model-View-ViewModel) design pattern to ensure a very clean separation of concerns and extremely responsive user experience. A set of automated testing scripts was also in place to verify the API endpoints and system integration in order to provide system reliability. This architectural change illustrates that modern web architecture and conversational artificial intelligence can be a major enhancement of the usability and stability of research-focused machine learning systems, which would enable more complex models to become more available to non-technical users." | Author(s) Yugesh Bhattarai | Faculty Advisors Dr. Tanzima Islam | Department Computer Science | Level Undergraduate |

Title Who Sees Pedestrian and Bicyclist Safety as a Problem? Evidence from National Survey Data | Abstract Pedestrian and bicyclist safety remains a persistent public concern, yet most studies treat perceptions of safety as uniform or examine isolated factors, offering limited insight into how these perceptions vary across population groups. This study addresses that gap by analyzing responses to a nationally representative survey question that asks whether pedestrian and bicyclist safety is viewed as a “major problem,” “minor problem,” or “not a problem.” The objective is to identify how these perceptions are structured across demographic, geographic, and socioeconomic subgroups. Descriptive statistics and chi-square tests are used to screen variables, followed by association rule mining (ARM) to uncover interaction-based subgroup patterns. The results show that perceptions of safety are not evenly distributed but systematically structured: “major problem” responses are more prevalent in urban and specific demographic–socioeconomic combinations, while “minor” and “not a problem” responses are more common in rural and non-metropolitan subgroups. These patterns indicate that perceived safety is shaped by interacting with population characteristics rather than single factor effect. By moving beyond variable-level analysis to subgroup-level patterns, the study provides an account of how safety concerns are distributed across the population and offers a basis for more targeted pedestrian and bicyclist safety strategies. | Author(s) Anika Baitullah and Arka Chakraborty | Faculty Advisors Dr. Subasish Das | Department Civil Engineering | Level Graduate |

Title COMPASS: A Unified Decision-Intelligence System for Navigating Performance Trade-off in HPC | Abstract HPC systems expose many configuration parameters that jointly drive competing objectives. Existing tools, such as autotuners, recommend optimal configurations, but either cannot propose minimal adjustments when a configuration is close yet misses a target, or ignore system-specific rules. We introduce COMPASS—an AI engine for HPC configuration optimization over operational traces. This paper: (1) formalizes configuration questions into query patterns; (2) develops an interactive generative modeling engine that formulates these queries as ML tasks and recommends feasible configurations, estimates impacts of hypothetical changes, and proposes minimal fixes when configurations miss a goal; (3) explains decisions, quantifies uncertainty, and—when confidence is low—provides targeted guidance on which configurations to run next; and (4) validates COMPASS using analytical ground truth, reconstruction accuracy, and reproduction of published findings. Integrating COMPASS with a validated open-source HPC scheduling simulator reduces average job turnaround time by 65.93% and node usage by 80.93% over prior results. | Author(s) Ankur Lahiry | Faculty Advisors Dr. Tanzima Islam | Department Computer Science | Level Graduate |

Title AI Perceptions and Career Confidence Among TXST Students | Abstract "As AI-powered career tools are used more and more across professional platforms, understanding how college students perceive and emotionally respond to these technologies has become a strategic priority for companies like LinkedIn. This study examines the psychological and behavioral dimensions of AI adoption among 138 Texas State University students, investigating how AI-related attitudes shape career confidence and hiring beliefs. Grounded in the Technology Acceptance Model (TAM) and Career Decision Self-Efficacy (CDSE) theory, we measured five constructs. A linear multiple regression revealed that AI employability belief (β = .477, p < .001) and AI job-replacement anxiety (β = −.172, p = .021) together explained 27.8% of variance in career confidence. A binary logistic regression further demonstrated that students who believed AI fluency enhanced their employability were over five times more likely to view AI as a hiring advantage (OR = 5.161, p < .001), with 74.6% overall classification accuracy. These findings suggest that cultivating AI employability belief is an impactful lever for reducing career anxiety and increasing engagement with AI-powered career services among college students." | Author(s) Anna Kaic and Pamela Vilchez Cordonero | Faculty Advisors Dr. Jeremy Sierra | Department Marketing | Level Graduate |

Title Sustainable Transportation System Design by Analyzing Crash Factors: A Machine based Approach on Bicyclist Crash Prediction | Abstract Bicyclist crashes pose a major safety concern, as cyclists face much higher risk of fatal and serious injury than vehicle occupants. This study uses interpretable machine learning to predict bicyclist crash severity in Texas and to identify key risk factors that can guide safety interventions. A dataset of 2,979 crashes with 14 roadway, environmental, behavioral, and demographic features is modeled using a unified pipeline with one-hot encoding and oversampling for class imbalance. A Random Forest classifier achieves the best performance with an accuracy of 0.8792 and balanced precision, recall, and F1 score. Class-specific SHAP analysis has revealed that collision dynamics, especially straight-moving motor vehicles, are the dominant drivers of severity, while rural settings, winter conditions, and helmet nonuse increase the likelihood of KA and BC outcomes. The results provide data-driven guidance for protected infrastructure, speed management, and helmet promotion to support a safer, more sustainable transportation system. | Author(s) Arka Chakraborty and Anika Baitullah | Faculty Advisors Dr. Subasish Das | Department Ingram School of Engineering | Level Graduate |

Title Attention-Informed Surrogates for Navigating Power-Performance Trade-offs in HPC | Abstract High-Performance Computing (HPC) schedulers must balance user performance with facility-wide resource constraints. The task boils down to selecting the optimal number of nodes for a given job. We present a surrogate-assisted multi-objective Bayesian optimization (MOBO) framework to automate this complex decision. Our core hypothesis is that surrogate models informed by attention-based embeddings of job telemetry can capture performance dynamics more effectively than standard regression techniques. We pair this with an intelligent sample acquisition strategy to ensure the approach is data-efficient. On two production HPC datasets, our embedding-informed method consistently identified higher-quality Pareto fronts of runtime-power trade-offs compared to baselines. Furthermore, our intelligent data sampling strategy drastically reduced training costs while improving the stability of the results. To our knowledge, this is the first work to successfully apply embedding-informed surrogates in a MOBO framework to the HPC scheduling problem, jointly optimizing for performance and power on production workloads. | Author(s) Ashna Nawar Ahmed | Faculty Advisors Dr. Tanzima Islam | Department Computer Science (Per4ML Lab) | Level Graduate |

Title Robust Classification of Imbalanced, Noisy Environmental Data Using Weighted Relaxed Support Vector Machines for Contaminant Monitoring | Abstract "Environmental and energy monitoring systems often produce highly imbalanced datasets, where true contamination or high-risk events are rare and measurements are corrupted by noise, sensor drift, or labeling errors. Standard classifiers and simple cost-sensitive approaches can become biased toward the majority (background) class or overly influenced by outliers, leading to unreliable detection and risk assessment. Our work focuses on Weighted Relaxed Support Vector Machines (WRSVM) as a robust classification framework specifically designed for such conditions. Prior work on support vector machines for imbalanced and noisy data has explored class-weighted SVMs, relaxed SVMs with global slack budgets, and fuzzy SVMs with sample-level weights, but these approaches either amplify minority outliers or do not provide a principled mechanism for class-specific outlier control. Building on this literature, our study combines class-dependent penalties with class-specific free slack budgets to jointly address class imbalance and outlier contamination within a single optimization model. We have developed and analyzed an exact quadratic programming (QP) formulation of WRSVM for small and moderate problem sizes and introduced a Sequential Minimal Optimization (SMO)–based heuristic specifically for large-scale WRSVM instances where the full QP becomes computationally or memory prohibitive. The framework is evaluated on benchmark tabular datasets, a gas sensor array in open sampling settings (wind-tunnel chemical releases), and an Industrial vs Residential Air Quality classification dataset derived from ambient pollutant measurements. Across severe imbalance ratios and injected label noise, WRSVM consistently attains high geometric mean (G-mean), demonstrating robust performance for both minority and majority classes. On datasets up to 3000 samples and higher-dimensional sensor data, the SMO-based solver produces WRSVM solutions with G-mean close to the exact QP formulation while enabling training on instances where the exact solver fails to scale. Moreover, the class-specific free slack allocation naturally highlights atypical samples, providing built-in outlier detection without additional preprocessing or resampling. Overall, this study demonstrates that WRSVM with an efficient SMO solver is a practical and robust tool for classification tasks in environmental sensing and contaminant monitoring." | Author(s) Imtiajur Rahman | Faculty Advisors | Department Industrial Egineering | Level Graduate |

Title Adversarial Resilience of Transformer Architectures: Evidence from LLaMA and FLAN-T5 | Abstract "Large language models (LLMs) are increasingly deployed in decision-support settings where robustness to adversarial input is critical. While prior work has focused heavily on encoder-only models, less is known about how robustness varies across transformer architectures. The objective of this project is to evaluate and compare the adversarial resilience of encoder–decoder (FLAN-T5) and decoder-only (LLaMA 3 / 3.1) architectures, and to assess whether parameter-efficient fine-tuning improves robustness without sacrificing interpretability or clean performance. Methodologically, we fine-tune LLaMA-3.1-8B-Instruct using LoRA and QLoRA with the Unsloth framework and FLAN-T5-Large using instruction-based sequence-to-sequence training on the IMDB sentiment dataset. All models use a generation-as-classification paradigm for consistency. We evaluate performance under a comprehensive adversarial attack suite, including word-level (random noise, spelling, token splitting), sentence-level (insertion, deletion, shuffling), and semantic-level attacks (back-translation and summarization), across multiple corruption intensities . Results show that both architectures achieve strong clean accuracy (~96–97%), with FLAN-T5 demonstrating high stability under word-level perturbations and LLaMA showing greater resilience under moderate sentence-level attacks. However, both models experience substantial degradation under semantic transformations, particularly summarization, revealing architecture-specific failure modes . Parameter-efficient fine-tuning preserves clean performance while improving robustness, highlighting a favorable robustness–efficiency tradeoff. Overall, the project demonstrates that adversarial resilience is architecture-dependent and that proactive, lightweight defenses can materially improve robustness, supporting more responsible deployment of LLMs in high-stakes analytic environments." | Author(s) Krishna sai Mandadapu | Faculty Advisors Dr. Dr. Tahir Ekin, Dr. Lucian Visinescu | Department Information Systems and Analytics | Level Graduate |

Title PRECISION-Connect: AI-Ready Multimorbidity and SDOH Risk Vectors for Explainable 30-Day Readmission and County-Level Disparity Modeling | Abstract PRECISION-Connect is a population health analytics system for Medicare home health beneficiaries that integrates multimorbidity, frailty, utilization, and county-level social determinants of health (SDOH) into an AI-ready risk vector. Using over 440,000 Texas home health visits, it links visit-level clinical risk with county-level disparity indices to support explainable 30-day readmission prediction and disparity-aware county comparisons through an interactive clinician-facing dashboard. | Author(s) Mirna Elizondo and Chloe Jones | Faculty Advisors Dr. Jelena Tešić | Department Computer Science | Level Graduate |

Title Is Embedding Fusion a Viable Representation Learning Strategy for HPC Performance Data? | Abstract "High-performance computing (HPC) performance prediction is critical for optimizing application behavior across diverse workloads. Existing approaches predominantly rely on numerical hardware performance counters, which often fail to capture structural and semantic characteristics of programs. This work investigates whether combining information from multiple sources improves the prediction capability of downstream models. We consider heterogeneous data modalities, including tabular performance features (hardware counters and input parameters) and code-based representations derived from program structure. These sources differ significantly in format, ranging from vector-based inputs to structured representations. To address this, we explore embedding-based representation learning and evaluate fusion strategies that integrate multiple modalities into a unified feature space. Experiments are conducted across several HPC proxy applications using a leave-one-application-out evaluation setup, with performance measured using Mean Absolute Percentage Error (MAPE). Our findings show that while different modalities capture complementary information, simple fusion approaches do not consistently outperform strong tabular baselines, highlighting the need for more data-aware and adaptive fusion strategies. This work provides insights into the challenges of multimodal representation learning for HPC performance prediction." | Author(s) Nawshin Tabassum Tanny | Faculty Advisors Dr. Tanzima Islam | Department Computer Science | Level Graduate |

Title AI-Powered Stroke Risk Prediction | Abstract "Stroke is one of the leading causes of death and long-term disability worldwide. Early prediction of stroke risk can significantly improve preventive care and reduce healthcare costs. With the increasing availability of healthcare data, machine learning (ML) techniques provide an opportunity to identify high-risk individuals before the onset of severe symptoms. This project aims to develop a predictive model that classifies whether a patient is likely to experience a stroke using demographic and medical attributes. Additionally, we aim to enhance model interpretability using explainable AI techniques inspired by recent research on SHAP-based stroke prediction models. Traditional clinical assessments rely heavily on physician experience and may not fully leverage large-scale patient data. Existing models often lack interpretability, making it difficult to trust healthcare settings. There is a gap in combining accurate prediction with transparent explanations that clinicians and patients can understand." | Author(s) Shivani Narang, Sowmya Balaji, and Deep Sharma | Faculty Advisors | Department McCoy college of Business | Level Graduate |

Title FACTS Multimodal Decomposition Dataset (FMDD): AI-Ready RGB, Metadata, and Weather Benchmark for Postmortem Interval Estimation | Abstract FACTS Multimodal Decomposition Dataset (FMDD) is an AI-ready benchmark derived from 2011-2023 donor placements at the Texas State University Forensic Anthropology Center (FACTS). FMDD couples 741 outdoor RGB decomposition images from 99 donors (0-384 days post-placement) with donor bioprofiles, placement and intake metadata, and harmonized weather records from local stations and open data services. The dataset is curated through a semi-automatic pipeline that integrates foundation-model-assisted full-body segmentation, expert-reviewed masks, image-to-text captions, and standardized tabular preprocessing across donor and environmental streams. We present baseline experiments in body segmentation, image-to-text captioning, and multimodal decomposition-stage classification to demonstrate learnability and to illustrate how FMDD can support PMI estimation, multimodal representation learning, and forensic taphonomy research. | Author(s) Tanzina Akter Tani, Mirna Elizondo, and Stephanie Baker | Faculty Advisors Dr. Jelena Tešić, Daniel Wescott | Department Computer Science & Anthropology | Level Graduate |

Title Bounding box to Segmentation Labeling for Pavement Distresses Using a Closed-loop Platform on 2D/3D Images | Abstract This research aims to reduce the effort required to produce precise segmentation masks for pavement distress analysis. The proposed workflow leverages existing 2D/3D pavement datasets labeled with bounding boxes and uses Segment Anything Model 3 (SAM3) within a local CVAT system to generate initial masks, which are then refined by human experts with much less manual effort. A timing study comparing fully manual segmentation and box-guided SAM3 plus refinement is also included. The approach is particularly useful for rare distress classes because it enables the creation of more detailed annotations without requiring additional distress instances. Initial experiments on failed concrete patch and popout, two of the weakest classes in prior detection tasks, show promising results. Using the refined segmentation labels, a lightweight YOLO segmentation model improved mAP50 from 0.464 to 0.761 for failed concrete patch and from 0.007 to 0.690 for popout. The trained segmentation model will later be integrated back into CVAT to support a feedback loop for improved annotation. Overall, this work improves annotation efficiency, data quality, and practical customization for pavement distress segmentation. | Author(s) Wenhan Tao, and Tanzina Akter Tani | Faculty Advisors Drs. Jelena Tešić, Feng Wang, and Yongsheng Bai | Department Computer Science | Level Graduate |